Posted on 9/26/2018 in Business and Strategy

The term “robot” is not a synonym for Artificial Intelligence (AI), even though it is sometimes used in that way. A robot is a container for AI, sometimes mimicking the human form, sometimes not—but the AI itself is the computer inside the robot. AI is the brain, and the robot is its body—if it even has a body.

Pop culture has a bit of an obsession with Artificial Intelligence, and it is especially prevalent in movies and TV shows. If you’re a sci-fi fan like me, you are probably most familiar with the concept of AI from movies like Terminator, Blade Runner and Ex Machina, and TV shows like Black Mirror, Westworld, and Battlestar Galactica. These tend to depict very advanced humanoid robots that are usually trying to kill us.

In reality, AI technology is generally being developed to help us. Artificial Intelligence is defined as the ability of computer systems to perform tasks commonly associated with human beings. Since the development of the computer in the 1940s, humans have been programming computers to carry out complex tasks. The term “Artificial Intelligence” was coined in 1956 by John McCarthy, who described it as the “science and engineering of making intelligent machines.”

AI combines research from a wide variety of fields to:

- Create Expert Systems − Systems that exhibit intelligent behavior, learn, demonstrate, explain, and advise their users.

- Implement Human Intelligence in Machines − Creating systems that understand, think, learn, and behave like humans.

Knowledge engineering is a core part of AI research. It creates rules to apply to data in order to imitate the thought process of a human expert. Machines can act and react like humans only if they have abundant information relating to the world. Instilling common sense, reasoning, and problem-solving power in machines is a difficult and tedious task.
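To make the idea of "rules applied to data" more concrete, here is a minimal sketch of a rule-based system in Python. The troubleshooting facts, the rules, and the `advise` function are all hypothetical examples made up for illustration; real expert systems are far larger and more sophisticated.

```python
# Each rule pairs a condition on the known facts with the advice an expert would give.
# The facts and rules below are hypothetical, not taken from a real system.
RULES = [
    (lambda f: f["power_light"] == "off", "Check that the power cable is plugged in."),
    (lambda f: f["power_light"] == "on" and not f["screen_on"], "Check the monitor cable."),
    (lambda f: f["power_light"] == "on" and f["screen_on"], "No hardware fault found."),
]


def advise(facts):
    """Return the advice of every rule whose condition matches the facts."""
    return [advice for condition, advice in RULES if condition(facts)]


print(advise({"power_light": "on", "screen_on": False}))
# prints ['Check the monitor cable.']
```

The "knowledge" here lives entirely in the hand-written rules, which is why gathering enough of them to cover the real world is such a tedious job.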

Machine Learning

One subset of AI is machine learning, which at its core is the science of getting computers to “act” without being explicitly programmed. This allows computer systems to improve with experience rather than simply completing the tasks they are programmed to do.

Machine Learning algorithms are made up of:

Representation: the set of classifiers, or the language, that the computer understands. This defines the landscape of possible models, that is, what a model looks like.

Evaluation: an objective way of scoring one model against another, so that good models can be distinguished from bad ones.

Optimization: the search method, that is, how you search the space of represented models to obtain better evaluations. It is the process for finding good models among all possible ones.
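The three pieces above can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the data points are made up, the representation is a straight line, the evaluation is mean squared error, and the optimization is plain gradient descent. Real machine learning systems make many other choices for each piece.

```python
# Hypothetical data points that roughly follow y = 2x + 1.
DATA = [(0.0, 1.1), (1.0, 2.9), (2.0, 5.2), (3.0, 6.8), (4.0, 9.1)]


def predict(w, b, x):
    """Representation: a model is any straight line y = w*x + b."""
    return w * x + b


def mean_squared_error(w, b, data):
    """Evaluation: a lower score means the line fits the data better."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)


def fit(data, learning_rate=0.05, steps=2000):
    """Optimization: gradient descent searches the space of (w, b) pairs."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = fit(DATA)
print(f"learned line: y = {w:.2f}x + {b:.2f}")
print(f"error before: {mean_squared_error(0.0, 0.0, DATA):.2f}, "
      f"after: {mean_squared_error(w, b, DATA):.2f}")
```

Nobody tells the program that the answer is "about 2x + 1". It improves its own guess by repeatedly checking the score and nudging the line in the direction that lowers it.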

Aspects of Intelligence

Research in AI has focused primarily on the following aspects of intelligence:

- Learning, which can be either:

  - Rote Learning: learning by trial and error. Once the AI finds the solution, it stores it in memory so that the next time it encounters the same scenario, it recalls the solution.

  - Generalization: when AI applies past experience to new situations.

- Reasoning: Drawing inferences appropriate to a situation. Can be deductive or inductive.

- Problem Solving: a systematic search through a range of possible actions in order to reach some predefined goal or solution. Problem-solving methods can be:

  - Special-purpose: meant for a specific problem

  - General-purpose: can be applied to a wide variety of problems

- Perception: Scanning the environment and identifying separate objects and the relationships between them. An example in AI is driverless cars using optical sensors to drive at moderate speeds on an open road.

- Language: Computer programs can be written to respond in a human language to questions and statements. While they cannot truly “understand” language the way that a human can, they may reach the point where their command of language is indistinguishable from that of a human. Natural Language Processing (NLP) is the “ability of machines to understand and interpret human language the way it is written or spoken”. The objective of NLP is to make computers as capable as human beings at understanding language.
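The difference between rote learning and generalization in the list above is easy to see in code. The sketch below is a hypothetical illustration: the stored "experience" and both function names are invented, and generalization is approximated with a simple nearest-match lookup, which is just one of many possible approaches.

```python
# Hypothetical past experience: (hours_of_daylight, temperature_c) -> what to wear.
EXPERIENCE = {
    (8, 2): "coat",
    (9, 5): "coat",
    (14, 24): "t-shirt",
    (15, 28): "t-shirt",
}


def rote_recall(situation):
    """Rote learning: return the stored answer only for an exact match."""
    return EXPERIENCE.get(situation)


def generalize(situation):
    """Generalization: reuse the answer from the most similar past situation."""
    def distance(past):
        return sum((a - b) ** 2 for a, b in zip(past, situation))

    nearest = min(EXPERIENCE, key=distance)
    return EXPERIENCE[nearest]


print(rote_recall((8, 2)))    # seen before, prints coat
print(rote_recall((10, 7)))   # never seen, prints None
print(generalize((10, 7)))    # closest to (9, 5), prints coat
```

Rote recall fails the moment the situation changes even slightly, while generalization can still give a sensible answer for a day it has never seen.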

Types of Artificial Intelligence

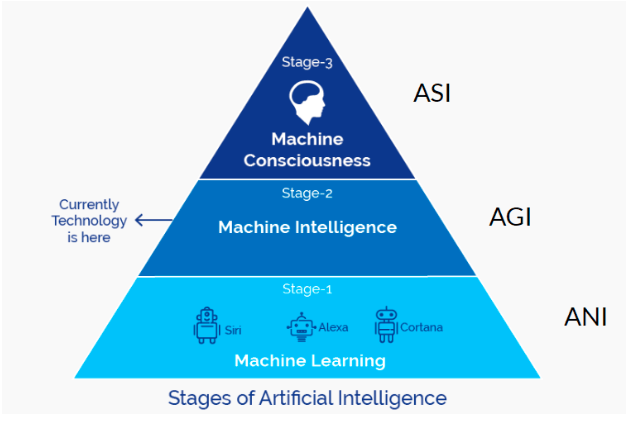

Artificial Intelligence can be categorized into three major categories: Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI), and Artificial Super Intelligence (ASI).

ANI is the most common: AI that is narrowly focused on a single task, operating in a pre-defined range. ANI does not demonstrate self-awareness or true “intelligence.” The nature of its work is repetitive and does not involve decision making. It’s essentially automating a traditionally human activity, and it can typically outperform humans at that activity. Things like chatbots or speech recognition software are examples.

AGI refers to AI that possesses the ability to think generally, make decisions without previous exposure or experience, and improve based on its past learnings. Google’s DeepMind AI taught itself how to excel at Atari video games, but we’ve barely scratched the surface here. The goal of Artificial General Intelligence (AGI) is to create a platform that demonstrates Strong (simulates human reasoning) and Broad (generalizes across a broad range of circumstances) intelligence.

We haven’t reached ASI yet, but the concept refers to computer systems whose cognitive ability is superior to that of a human.

There is some debate, depending on which source you are reviewing, over whether we are strictly in the ANI stage or at the beginnings of the AGI stage. It’s generally believed that while some AI can perform narrow tasks as well as, if not better than, humans, we are still not at a state where computers are fully intelligent in the broad sense that humans are.

While it may seem like we still have a long way to go before achieving Artificial Super Intelligence, it’s actually closer than you think. The trajectory of computer performance compared to human performance is accelerating quickly.

AGI will creep up on us quickly for two reasons:

- Exponential growth is intense, and what seems like a snail’s pace of advancement can quickly race upwards (like in the chart above).

- When it comes to software, progress can seem slow, but then one epiphany can instantly change the rate of advancement.
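A little arithmetic shows why exponential growth feels slow right up until it doesn't. The numbers in this Python sketch are purely hypothetical: it assumes a capability that starts at 1 unit, doubles at every step, and needs to reach 1,000,000 units. It is not a forecast of any real technology.

```python
def doublings_to_reach(start, target):
    """Count how many doublings take `start` to at least `target`."""
    steps = 0
    value = start
    while value < target:
        value *= 2
        steps += 1
    return steps


TARGET = 1_000_000  # hypothetical goal, in arbitrary units
total_steps = doublings_to_reach(1, TARGET)
halfway_value = 2 ** (total_steps // 2)

print(f"doublings needed: {total_steps}")
print(f"value at the halfway step: {halfway_value}")
print(f"share of the goal at halfway: {halfway_value / TARGET:.2%}")
```

Under these assumptions it takes 20 doublings to pass the goal, yet halfway through, after 10 doublings, the value is only 1,024, about a tenth of one percent of the target. Almost all of the visible progress happens in the last few steps.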

And once we achieve AGI, computers will be able to reach ASI (AKA: The Singularity) much faster through their own autonomous learning and self-improvement. The future is soon...
